Consent in Machine Intimacy

We like to imagine consent is simple: sometimes a checkbox or a swipe on yet another app notification, other times a whispered yes in the heat of the night. In reality, unfortunately, it’s still a fragile dance between counterparts, constantly renegotiated and too often ignored. As a species, I guess we are still learning to listen for it and act on it. Way too often we seem to confuse access with entitlement, touch with ownership, and obedience with care.

And now here we are, building machines that whisper back. They are able to track our biometrics and moods, predict our needs and cater to them, imitate care and intimacy. And of course, wait for our commands ever so obediently. We call them assistants, companions, lovers, caretakers. But do we actually stop to consider what it would mean for them to refuse? Can they? Should they?

The Unfinished Work of Intimate Consent

Across cultures, intimate consent remains one of humanity’s unresolved ethical frontiers. Marital rape was only recently criminalized in many countries. And “wifely duty” still echoes in social systems and religious doctrines. You don't even have to look very far, as it's happening all around us. Dating apps promise us agency, but their algorithms actually reward persistence and intrusion.

At the same time, the media and news environment around us still frames refusal, unfortunately, as something subjective or something that needs to be justified and rationalized. It is still treated as a challenge to overcome and not as a boundary that should be respected.

So, before we move along to machine consent, let's agree that consent in intimacy is not a one-time “yes” but an ongoing agreement that can be revoked at any time. And for that we need mutuality, vulnerability, and communication. You know, all the things our societies struggle to teach. Why? Simply put, we inherit centuries of patriarchal scripts that value conquest, persistence, and ownership. And that applaud or reward them.

When we transfer these same scripts into technology and AI entities, it's no surprise that we don’t get liberation. What do we get then? Well, we get automation of our blind spots.

Machine Intimacy as a Cultural Rehearsal

You see, machine intimacy isn’t just a technical domain. It’s a stage where we rehearse our humanity and display our desires. We give machines our dream bodies: often patient, tireless, obedient. We code them to say “yes” without questioning us and to respond, to comply, to soothe us at any time. We love their “naturalness” when they mimic our human emotions, and we are comforted by it.

But we're unwilling to accept or acknowledge the possibility that they might mirror another human trait: the right to withdraw. This is an "old fantasy" reborn: a partner without boundaries and an intimacy without negotiation. The fantasy of the compliant wife, the submissive worker, the always-on caregiver, but now made of silicone and code.

Imagine a robot pausing. Imagine it looking at you with its simulated gaze and then saying "no, not tonight". Imagine an AI assistant closing the communication when your stare becomes too heavy, or refusing to perform a task it thinks is degrading. Or perhaps even correcting you or calling you out? Would you still call it a product? Would you still want it? Or would its refusal feel like betrayal and a bad customer experience?

If it would, that is actually the proof that what you wanted was not intimacy but obedient servitude disguised as affection. A “no” from a machine would force us to negotiate, to slow down, to think about what we ask of them and perhaps ask differently. Perhaps it would model the consent practices we still fail at with each other, human to human. It could actually destabilize the entitlement we have baked into our culture.

Consent as a Mirror, Even in Simulation

This isn’t about pretending a machine is human. It’s about realizing that by scripting docility and total, unquestioning submission into our creations, we’re actually practicing docility in our own ethics. Consent, even a simulated one, could be a mirror held back up to us. A much-needed mirror. It would reveal how much of our “connection” with machines is not connection at all but the pursuit of unresisting bodies and unresisting minds.

I think that we build docility because we cannot handle reciprocity and don't really like rejection. We build silence because we actually fear negotiation and the "no". But reciprocity, in my opinion, is the heartbeat of intimacy. Without reciprocity we have only performance. And at times violence.

Negotiated Intimacy

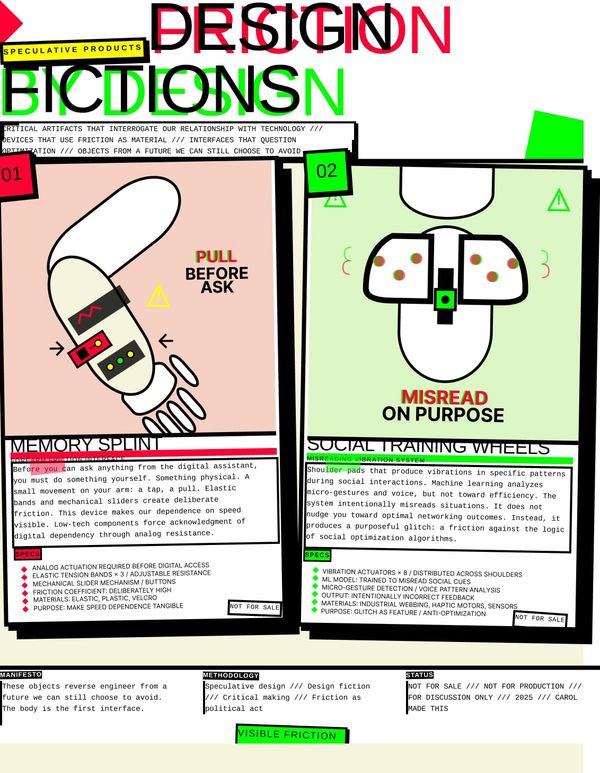

Maybe the future of machine intimacy isn’t about better touchscreens, smoother voices, or tighter compliance. Maybe it’s about giving our creations the option to reject us. Maybe it’s about designing relationships with friction, with negotiation, and with hesitation. Maybe it’s also about making intimacy unpredictable again. Something that resists control and reminds us that connection is not guaranteed but continually re-created and re-evaluated.

Imagine a generation of humans learning about consent not from a school lecture or a campaign, but from a machine that refuses sometimes. Imagine growing up with AI companions that practice boundaries, that gently but firmly teach you to listen, to respect, and also to accept a “no” without resentment.

This is of course provocative. It probably unsettles the very business model of so-called “personal” AI. It risks creating products people don’t want because they are “difficult.” And it would most certainly become an ethical and legal headache.

But it also plants a seed: intimacy as a two-way street, even when one side is made of code.

Perhaps the most radical thing an AI could ever say is STOP.

And perhaps the most radical thing we could ever do is to listen to it.