From Adorable to Alarming

In a world full of cool gadgets, fun toys, and realistic robots, it’s fairly common to feel a bond with stuff that isn’t actually alive. Whether it's a service robot's expressive face or a cute domestic device, there's something fascinating about inanimate objects that appear to "come alive." These designs don't just serve a functional purpose; they also nudge us to connect, blending the practical with the playful in our daily lives.

Anthropomorphism, our natural tendency to give things human features, is making it harder to tell what's personal and what's just an object. Soft, rounded shapes and friendly “faces” on digital assistants really help make things feel more approachable and even trustworthy. But, you know, this approach can be a little tricky.

So, when we make our tech feel a bit more "human," or a lot, are we changing the way we connect with machines, or does it really just help us be more productive and enjoy ourselves more? Let’s take a casual, semi-academic dive into the highs and lows of cuteness in design, exploring the psychological, practical, and ethical sides of anthropomorphism in technology and how it bridges functionality and emotion in the way we connect with inanimate objects.

Faces in the Clouds, Faces in Tech

We're stepping into a fascinating world where psychology, design, and technology meet, exploring how people interact with non-human entities like smart machines, social robots, and virtual assistants. These days, many modern devices feel like more than just tools or gadgets. They’ve become “characters” that we interact and bond with, rather than objects we simply use. The human-like responses of digital assistants and the expressive eyes of robots invite us into what can feel like genuine relationships with machines. And humans? Well, it seems we're more than happy to welcome these high-tech 'friends' into our lives, one charming beep and friendly face at a time.

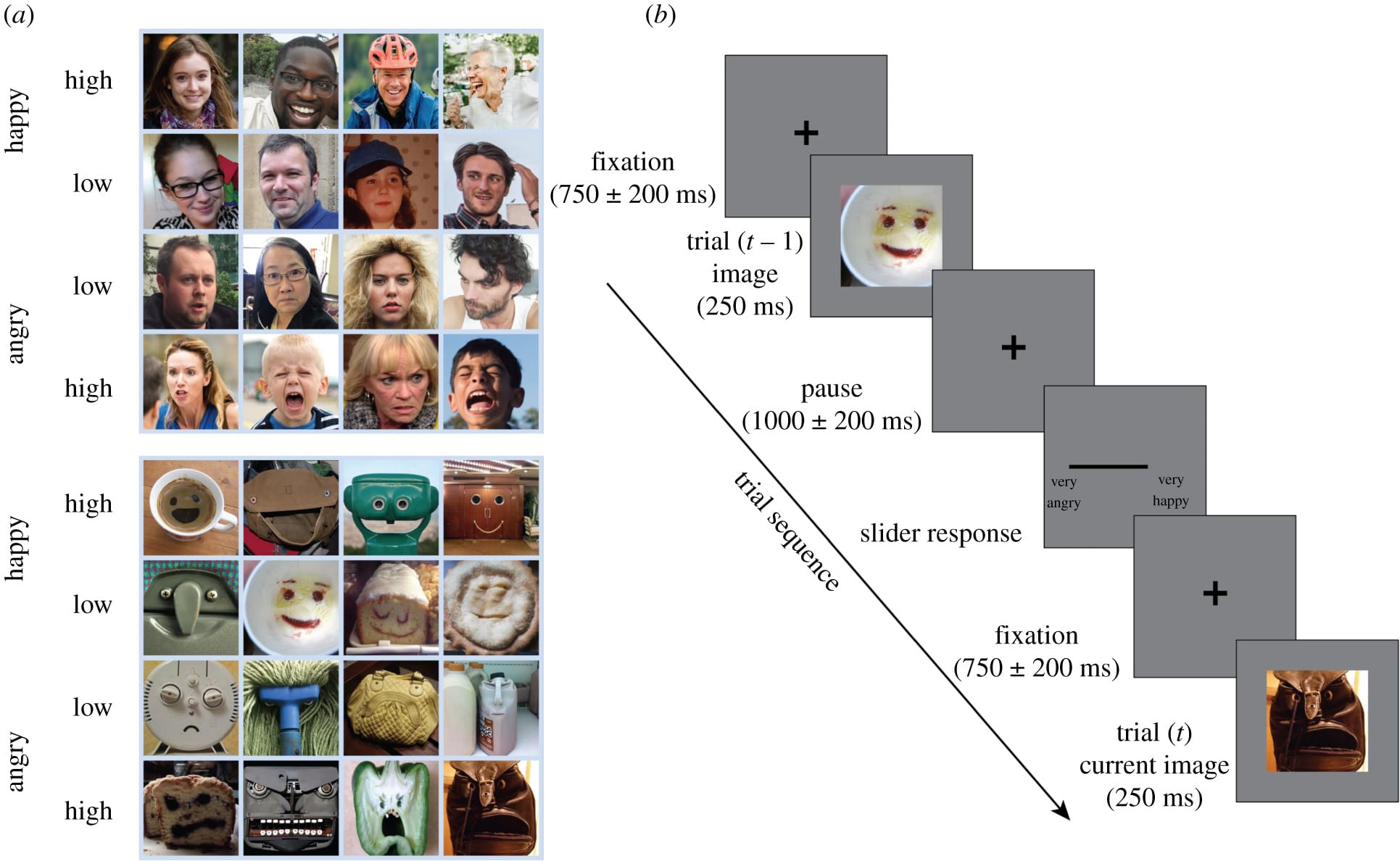

In these interactions, we naturally find ourselves seeing faces, interpreting emotions, and assigning personalities, even when there’s nothing there at all. This tendency has been around for a long time; people have always liked to give their surroundings a personal touch, naming their boats or seeing faces in the clouds or the sun. A study on human faces and face pareidolia (illusory faces perceived in objects) found that the brain uses the same mechanisms to interpret emotions in illusory faces, such as a smiley cloud, as it does for real human faces. Interestingly, the researchers noted that this isn't unique to us: rhesus monkeys experience it too, which suggests the trait is shared with other primates. Although it occasionally leads to misconceptions, evolution seems to have made humans skilled at spotting emotional signals, at least the basic, clear-cut ones.

That system does have its limits. It does a solid job of picking up basic feelings like "happy" or "angry," but it struggles with subtler expressions, like a shy smile or a bored look. It's the same deal with non-human counterparts: despite all the research, they still struggle to read human expressions and to display the right ones for the situation. All in all, we need to dig deeper into how emotional tone, attention, and context affect how we perceive and interpret both real and illusory faces, both to understand human-to-non-human interaction and to design for it.

It's pretty interesting how we naturally tend to spot faces and emotions in objects, which is why we often end up giving technology a human touch. This tendency makes modern devices feel more intentional and engaging. Devices today chat with us using voice, expressions, and gestures, making them seem more like companions than just gadgets. So, let's dive into why and how these interactions are designed, checking out the psychological pull of connecting with non-humans and how it shapes our daily tech experiences.

Our Urge to Connect

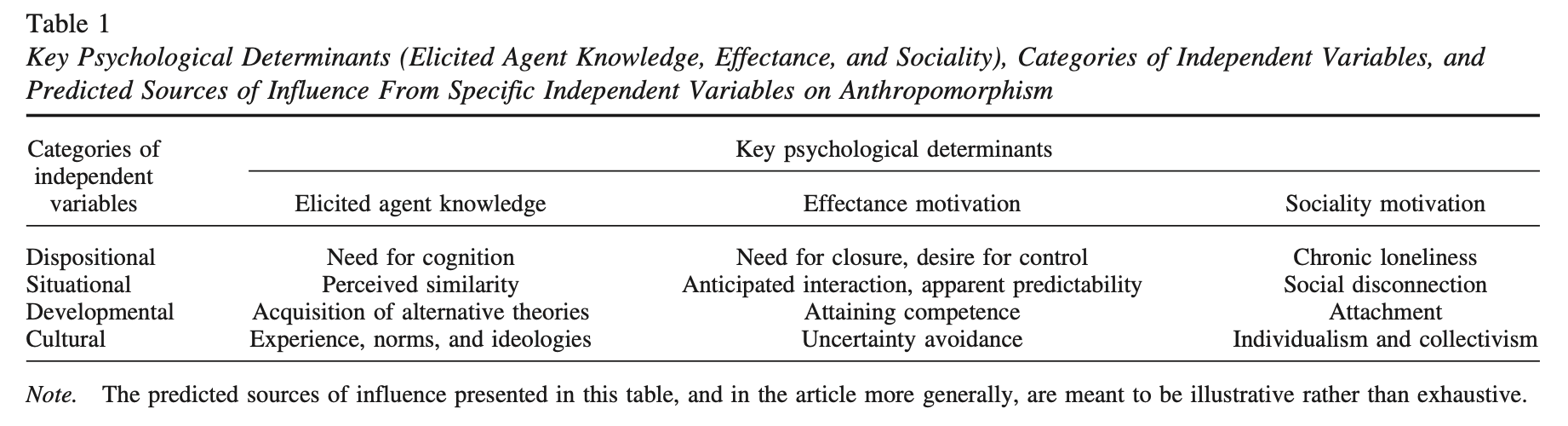

Research helps explain why we tend to give human traits to non-human entities, offering some interesting insights into this common behaviour. One study by Nicholas Epley, Adam Waytz, and John T. Cacioppo digs into the psychological reasons behind it, such as when you think your pet has a personality or imagine a robot feeling emotions.

In their paper, "On Seeing Human: A Three-Factor Theory of Anthropomorphism," they identified three key factors:

- Elicited agent knowledge - how easily we apply human-like understanding to something.

- Effectance motivation - our desire to make sense of others' behaviour.

- Sociality motivation - our need for social connection.

The researchers looked into these factors to figure out when and why we tend to see human traits in non-human things. See the table below from their paper for a detailed breakdown of these influences.

Their work examined how these factors interact with personal traits, surroundings, life stages, and cultural vibes to shape anthropomorphism. This interplay helps explain why anthropomorphism is so common and yet varies so much from person to person and from context to context.

The authors pointed out that even though there aren't many studies focused specifically on anthropomorphic beliefs, research on how people perceive other humans offers a lot of valuable insight. In that sense, anthropomorphism is all about applying the same thought processes we use with people to attribute human traits to nonhuman beings. Because of this overlap, understanding how people perceive other humans can also tell us why and when they start seeing human traits in nonhuman things.

In the study "The Development of Anthropomorphism in Interaction: Intersubjectivity, Imagination, and Theory of Mind," Gabriella Airenti looked at how children come to attribute human traits to animals, objects, and even natural events as they grow up. Airenti connects this to their developing ability to share experiences and understand others' perspectives, along with their imagination. As children get better at understanding that other people have their own thoughts and feelings, this shapes how they see and relate to animals or objects as if they had human traits.

The study highlights that anthropomorphism varies across cultures and situations and plays an important role in shaping our social thinking. According to this view, anthropomorphism arises through interaction, which helps explain why people ascribe human characteristics to animals and other non-human entities, and underlines its importance in social and developmental contexts.

These studies, along with many others, offer insights that go beyond just anthropomorphism by connecting it to broader aspects of human behaviour. They shed light on why we tend to make things personal, or why storytelling is all about characters we can relate to, and even why we get attached to our gadgets. When we understand how anthropomorphic thinking works, we start to really see our natural desire to find meaning and connection, whether it's with people, animals, objects, or even things we imagine. And in that sense, our urge to connect with non-human agents seems quite clear.

Making Tech Feel Alive

Designing technology that feels (more) human isn’t only about looks; it’s a strategic approach to building trust, improving usability, and nurturing emotional connections. And selling things. Anthropomorphism shapes both how much we want to interact with devices and how we do it. By incorporating human-like behaviours or appearances, designers make technology more inviting and engaging, shifting how devices are viewed from simple tools to approachable companions that meet personal needs beyond their practical functions.

The Benefits: Trust, Ease of Use, and Emotional Engagement

Anthropomorphic technology has the potential to foster trust, ease of use, and emotional connection. For example, the welcoming tone of a voice assistant can build trust and comfort. People who feel intimidated by technology may especially benefit, since this can make them more willing to adopt and use such solutions. Likewise, robots designed to support elderly people, or therapy bots that respond attentively, may help reduce loneliness and support mental health. By simulating human-like traits, this approach can both help people and improve their experience, creating a stronger bond between humans and the technology they use.

In the article "Anthropomorphism in Human–Robot Interactions: A Multidimensional Conceptualisation," Kühne and Peter explore the mechanisms that make these benefits possible. They argue that in human–robot interaction, anthropomorphism is multidimensional rather than a single concept. Drawing on Wellman's Theory-of-Mind approach, they identify five key components that shape how people perceive robots:

- Thinking - attributing cognitive abilities to robots.

- Feeling - perceiving robots as capable of experiencing emotions.

- Perceiving - believing robots can process sensory information.

- Desiring - assuming robots have goals or desires.

- Choosing - viewing robots as capable of making decisions.

These aspects underline the complexity of anthropomorphism and its capacity to affect user interactions.

The authors also distinguish between what leads to anthropomorphism and what follows from it. Design aspects such as a robot's form and movement can prompt users to attribute human-like traits to it. Once people do so, they may go on to assign the robot a personality or values, which can deepen how they connect and interact with it, both emotionally and functionally. At the same time, this process can create challenges, including over-reliance on these technologies or misplaced trust in them.

Understanding these dimensions and what they mean is essential for creating human–robot interactions that work well and are ethically responsible. By applying these insights to real-world designs, developers can enhance the benefits of anthropomorphic technologies while managing the potential risks.

The Drawbacks: Unrealistic Expectations and Ethical Dilemmas

Anthropomorphism has its advantages, but it also comes with challenges. Friendly robots can create unrealistic expectations, leading people to become overly dependent on them or to misunderstand what they can actually do. For example, if people place too much emotional or mental weight on AI therapy bots, it could lead to disappointment or even misuse, because they might believe the bots’ responses are equivalent to genuine human empathy or to what a licensed therapist would advise.

Also, there are some ethical issues that come up when people get emotionally attached to devices that are really just machines. This brings up questions about manipulation, transparency, and how these relationships affect us psychologically. And when harm is done, who can be held accountable?

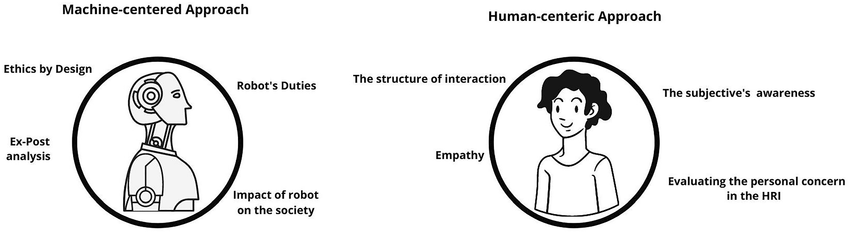

For instance, the article "Artificial Emotions: Towards a Human-Centric Ethics" by Laura Corti, Nicola Di Stefano, and Marta Bertolaso explores the ethical implications of incorporating artificial emotions into robotic systems. The authors suggest an approach grounded in human values to steer the development and use of emotionally responsive robots.

The article discusses how robots with artificial emotions influence the way we interact with them and offers ethical guidelines that put human well-being first. It raises important ethical questions, such as the authenticity of artificial emotions, the risk of manipulation, and the psychological effects on users. The authors emphasize being transparent about how robots display emotions, to avoid misunderstandings and preserve trust between humans and robots. They also stress aligning robots' emotional capabilities with human values and ethical standards to ensure a positive and safe integration into society.

Luisa Damiano and Paul Dumouchel's "Emotions in Relation: Epistemological and Ethical Scaffolding for Mixed Human-Robot Social Ecologies" investigates whether social robots (designed to engage emotionally) can actually coordinate emotions with humans. Rather than asking, "Can robots have emotions?", the authors propose that we focus on whether robots can successfully interact with people emotionally. They conclude that, although robots do not have emotions the way people or animals do, they can still take part in these interactions.

Today's robots have a limited capacity for emotional expression and engagement. Still, within these constraints, they can engage people in emotional interactions that offer unique benefits and insights. The authors suggest concentrating on "affective coordination," that is, how people and robots communicate emotionally. They highlight that:

- Robots, even with their limitations, can have meaningful emotional exchanges with humans.

- These interactions, although different from those between humans or between humans and animals, can still be genuine and meaningful.

- Robots can be seen as either imitations of human emotional systems or as a new type of emotional partner.

- Thinking of robots as new emotional partners means we need to develop new ethical frameworks.

In the end, the authors suggest that we should view robots as emotional companions instead of deceptive tools. Seeing human-robot interactions as a new kind of emotional experience opens up exciting possibilities for building unique connections and making a positive impact on society, as long as we use them in a responsible and ethical way.

Balancing Connection and Consequences

So, what can we learn from all this? Our ability to see faces in clouds and attribute personalities to our gadgets is not just a fun quirk; it's a key part of how we engage with the world around us. Anthropomorphism combines usefulness with a sense of fun, turning our technology from mere tools into companions we can truly connect with.

While these connections can be delightful and beneficial, they also carry important implications. When people attribute emotional awareness or moral responsibility to robots, they may overestimate what those robots can do or place trust in them that isn't warranted. This is especially challenging in areas like caregiving or education, where genuine empathy and sound moral judgement really matter and where misplaced trust can cause harm. Giving machines human-like qualities can blur the line between what's real and what's not, which raises important questions about how we should handle these relationships moving forward.

This presents us with a fantastic opportunity as users and designers to build technology that is engaging, intuitive, and maybe even comforting. However, it also asks us to proceed with caution. How much "human" do we actually want in our machines? How can we find a way to balance our emotional connections with our ethical responsibilities?

At the end of the day, diving into anthropomorphic design goes beyond just creating things that are cute or friendly; it’s about discovering what it truly means to connect, both with one another and with the technologies that influence our lives. Whether it’s the warm voice of a virtual assistant or the expressive “eyes” of a robot, one thing’s for sure: our relationships with machines are becoming more personal, and there’s no turning back now. Let’s enjoy the quirks, the charm, and the potential while also being mindful of the ethical and societal impacts as we move forward.

As I wrap up this casual exploration, my hoover huffs from across the room, declaring, “Mission complete, and once more, no thanks.” I roll my eyes and say, “Fine, great job, buddy,” and it beeps back cheerfully as it makes its way to the dock, most likely scheming its next passive-aggressive update. An update created, and enjoyed, by me, of course.