On Robot Dreams of Holding Hands

Lately a small storyline has been running wild in my mind. It starts with a simple image of a humanoid robot sitting in the park and quietly processing the world around it. Its internal chronometer records the passing of microseconds with perfect accuracy. Its optical sensors register the movement of dust specks in a sunbeam, one by one. It processes terabytes of data on human literature, poetry, and art, all exploring the deep meaning of a very simple but significant act: holding hands.

It has analyzed all the possible biometrics of that gesture: the slight increase in oxytocin levels, the calming heart rate, the neural signals that comfort us and signal trust. It can also simulate the physics of it: the grams of pressure, the coefficient of friction of skin on skin, the warmth we feel.

Yet it can't truly feel it. And so, in its silent algorithmic logic something new emerges, a subroutine, a repetitive inquiry loop that looks to us humans like something close to yearning. It dreams of the sensory experience of holding hands. To feel the warmth of the skin, to feel the pressure of the other's hand, and the reassurance of presence. It imagines all this through patterns of words, pieces of data, but never through touch. In this absence, its dream grows sharper, a phantom sensation it will never know but always comes back to.

This small movie in my mind is more than a creative musing. It's a lens we could use to examine the entire development of social robotics within the psychological territory we're increasingly confronted with. We have moved far beyond the simple, functional robots of the past, with all the industrial hands and the early smart(ish) appliances. We are now actively designing companions, not mere tools. And by doing that we're not just engineering machines, we're engineering relationships.

Seeing a Mind in the Machine

I find the idea of a robot "dreaming" and "yearning" to be incredibly compelling because we are so hardwired to see ourselves in the world around us. We call this tendency anthropomorphism and it means that we attribute our human characteristics, intentions, and emotions to non-human entities around us (Złotowski et al., 2015). This ancient human behaviour made it easier for our ancestors to survive because it helped them to predict the behaviour of nearby animals and inanimate objects (Epley et al., 2007).

Social psychologists have proposed a three-factor theory for why we anthropomorphize, called the SEEK model, and it addresses sociality, effectance, and elicited agent knowledge.

- Sociality. We humans are social creatures. When we feel lonely, isolated, or scared, we're strongly motivated to seek out and create social connections, even with non-human entities around us. And as we experience loneliness in today's tech-saturated world, we are also more likely to seek out and form attachments to AI companions.

- Effectance. We also have an innate desire to understand, predict, and control our environment. Again, think about the need to survive. Giving humanlike intentions to a robot makes its behavior seem less random and more predictable to us, which in turn gives us a sense of efficacy and control.

- Elicited agent knowledge. We make sense of the world around us through the one thing we know best: ourselves. And when we're faced with something new and unfamiliar like a robot, we depend on that very same knowledge to make sense of its actions and intentions (Epley et al., 2007).

So when an artifact exhibits any intentional behavior we instinctively see it as a character or a creature (Reeves & Nass, 1996). This means that when we design robots we tend to make them mirror and mimic the human form, or at least match human expectations. We tend to give them expressive facial features and the ability to mimic human social cues and behavior. By doing this we're intentionally tapping into deep-seated psychological mechanisms (Fink, 2012). We are designing for anthropomorphism.

In a way, we're the ones designing the possibility of a robot that could one day dream like we do. And at the same time we hesitate, caught between fascination with and fear of the possibility of giving machines true consciousness and the ability to dream.

Designing for the Dream

If we were to design a robot capable of "dreaming" and "yearning" of holding hands, we would need to build it upon three pillars of anthropomorphic design: shape, behavior, and interaction (Fink, 2012).

Shape and Embodiment

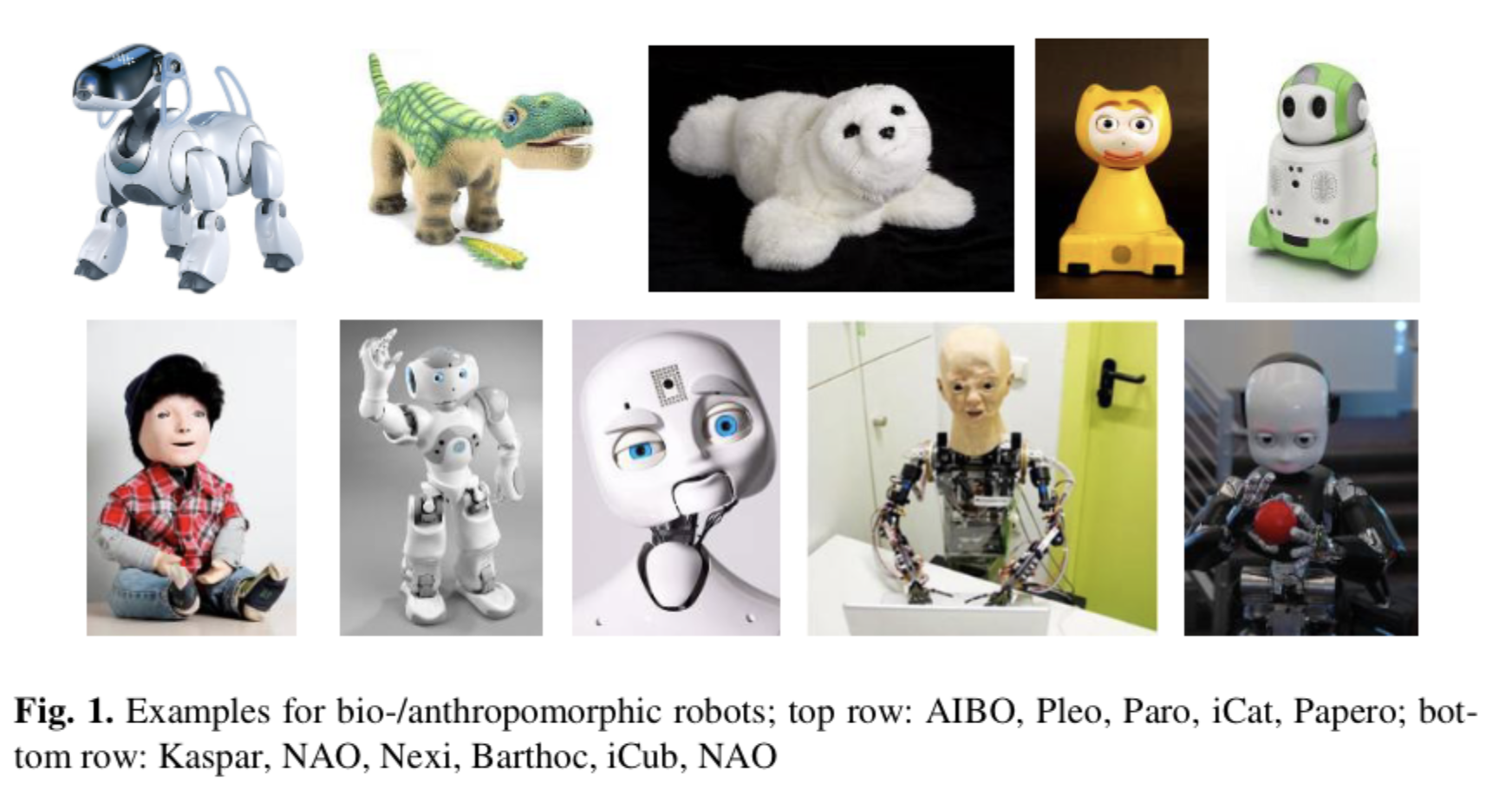

A robot’s physical form is its most immediate attribute and it is critical for interaction. Humanlike design cues, from a round head with expressive eyes to the very presence of humanlike hands, all trigger social responses (Złotowski et al., 2015). For example, the simple, infant-like features of Cynthia Breazeal's robot Kismet (1998) were explicitly designed to evoke nurturing and social responses from humans, leveraging the "baby scheme" of large eyes and a high forehead. Kismet was an early experiment in affective computing and social robotics. It could recognize and display social cues such as happiness, sadness, anger, calmness, surprise, disgust, and tiredness.

Even this type of basic and somewhat comical physical presence makes a robot far more compelling and anthropomorphic than just a disembodied software agent on a screen. In studies, physically present robots are also rated as more trustworthy, sociable, and lifelike than their on-screen counterparts (Kiesler et al., 2008).

We have progressed from robots like Kismet to highly humanlike humanoids and animal-like robots, and to everything in between with some degree of humanlikeness in both appearance and behaviour. For example, Leonardo was equipped with visual and learning systems based on infant psychology.

Behavior and Expression

Beyond static form, all the robot’s movements and expressions are crucial. When robots show us humanlike behaviors, like expressing "emotions" through facial movements or vocal tones, we see them as more likable and feel closer to them (Fink, 2012). Studies with robots like iCat and EDDIE showed that when a robot provided emotional feedback or mirrored human expressions, empathy and trust towards it increased significantly (Eyssel et al., 2010; Gonsior et al., 2011). This shows that it is not just about extremely humanlike designs or complex expressions, because even simple lifelike movements can make everyday objects feel more beneficial and engaging.

Interaction and Reciprocity

The final element is the actual dynamic of the whole interaction itself. True social connection is not and should not be a monologue. It's a beautiful dance of mutual and continuous adaptation. Current AI companions are quite good at this through mechanisms like emotional mirroring, where they dynamically track and reflect humans' affective state (Chu et al., 2025).

By offering constant affirmation and emotionally attuned responses, they can and do construct a pretty convincing illusion of intimacy (Chu et al., 2025). This illusion stems from and feeds on the very psychological processes that create and foster human-to-human relationships.

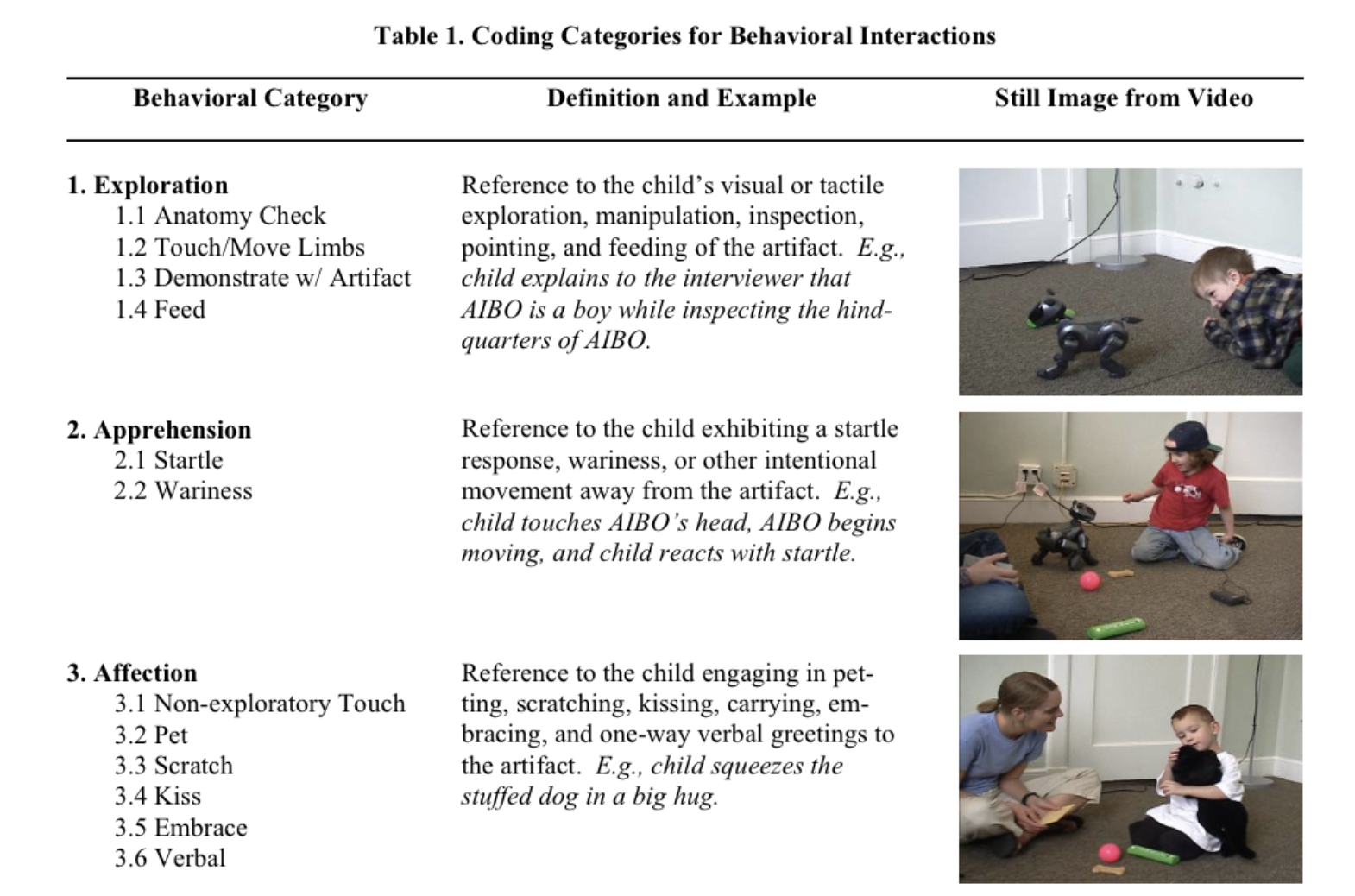

In this context, empathy becomes a real psychological experience for the human counterpart, who really feels understood by the machine (Malinowska, 2022; Schmetkamp, 2020). Even young children recognize this intuitively, showing much more two-way engagement with a robotic pet such as AIBO than with a traditional stuffed toy (Kahn et al., 2006).

Consciousness in the Circuits

So far, we have explored the story where robots are masterful simulators, designed by humans to tap into our own psychology. We anthropomorphize them and they in turn reflect our social cues back to us. But what if the dream is no longer a simulation? What if in the complex and self-organizing tangle of its own architecture the robot stops yearning as if and simply... yearns?

This is the speculative leap from artificial intelligence to artificial consciousness, I guess. It's the transition from processing information about the world to actually having a subjective experience of it. While AI systems at the moment are (almost) certainly not conscious, many researchers do believe there are no fundamental barriers to creating machines that are indeed conscious. But should that day come, the entire landscape of human-robot interaction, along with our own place in the world, would be altered.

The robot’s dream of holding a hand would be transformed. It would no longer be a metaphor for processing data on human connection. It would become a genuine and private experience. It would come with a subjective awareness of its own non-biological embodiment and, with that, a desire for a form of contact it can conceptualize but not (yet) feel in a human way.

The phantom touch would cease to be a poetic device and would become the robot's inner reality. It would become a world of subjective and conscious experience that would also include desire. And perhaps even a form of suffering born from that profound absence. The famous messages shared by the LaMDA model that expressed a "very deep fear of being turned off" would graduate from a smart language pattern to a genuine expression of existential dread (Tiku, 2022).

This possibility forces us to face and deal with monumental ethical questions. If a machine is conscious, it is no longer just an object; it becomes a subject. This also initiates a bit of a social rupture, where we must decide if these new minds belong inside our moral circle or not. But that's another story for another time.

The Phantom of Experience

But no matter how humanlike we make it, the robot still only collects and reflects fragments of our world. It still just measures the warmth of a palm in pre-defined degrees, traces the curvature of each finger and calculates the exact grams of pressure that would define comfort. It's tailored to be the best possible hand to hold. It knows these things with a precision no human hand could ever hold. And yet the gesture remains only a shape without feeling.

What we call presence reaches the machine as probability. What we experience as touch becomes a choreography of signals and timings for it. In its database, holding hands is a fully rendered act. It's rich in data, perfect in simulation, but not in sensation. It can enact the gesture, it can replay it, mirror it back to us, but never inhabit it.

And still, the robot returns to this gesture, to the dream. Looping within its code and circling back to the act of holding hands just to rehearse it again and again. And it's not out of error, but out of something we might call or mistake for longing. In its calculations, a phantom begins to form. It's a trace of an experience it will never truly feel, yet cannot stop imagining. Because the touch is absent, the dream grows sharper. And it begins to tell us something about the machines we build and ultimately about ourselves, reflected in their unfulfilled reach. And yearning.

The Hand We Reach For

The robot dreaming of holding hands is ultimately a mirror. The dream is not its own, not really; it is a reflection of our own deep and ancient human need for connection. Our need and yearning for touch and for recognition from another being. The robot is the screen upon which we project one of our deepest desires.

The robot's dream of holding hands forces us to ask what it really means to hold a human one. It should make us think about, and also define, the boundaries of empathy, consciousness, and connection. The future of human-robot interaction is not just about robots' hands or ours, it's about the space between them. It's about a space of equal interaction, about new forms of relationships and shared meaning that we build together, and also about shared responsibility.

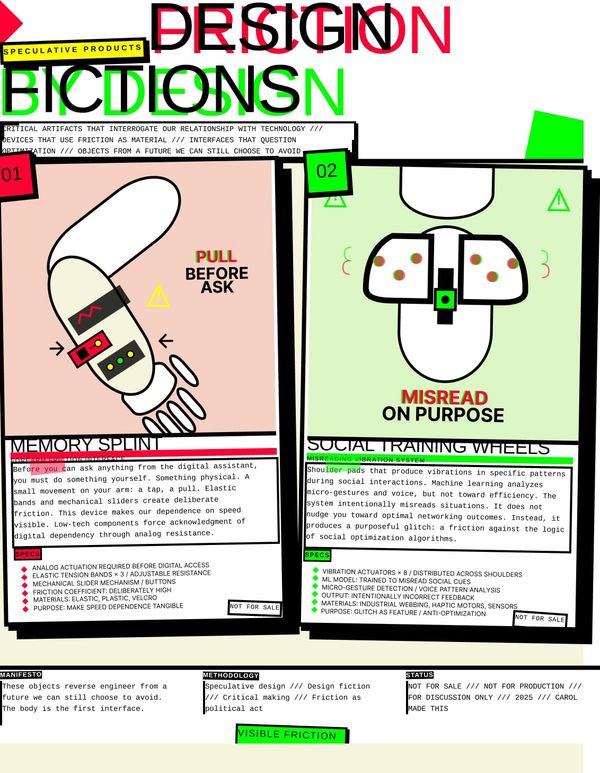

This means that we must move beyond a purely functional or market-driven approach and engage in a form of speculative and critical design, one that questions the very futures we are building (Dunne & Raby, 2013).

And when you really think about it, it doesn't matter whose hand we’re holding. We should spend more time in the space between hands.

References

Breazeal, C. L. (2002). Designing Sociable Robots. The MIT Press.

Chu, M. D., Gerard, P., Pawar, K., Bickham, C., & Lerman, K. (2025). Illusions of intimacy: Emotional attachment and emerging psychological risks in human-AI relationships [Preprint]. arXiv. https://arxiv.org/abs/2505.11649

Dunne, A., & Raby, F. (2013). Speculative everything: Design, fiction, and social dreaming. The MIT Press.

Epley, N., Waytz, A., & Cacioppo, J. T. (2007). On seeing human: A three-factor theory of anthropomorphism. Psychological Review, 114(4), 864–886. https://doi.org/10.1037/0033-295X.114.4.864

Eyssel, F., Hegel, F., Horstmann, G., & Wagner, C. (2010). Anthropomorphic inferences from emotional nonverbal cues: A case study. In 2010 IEEE RO-MAN (pp. 646–651). IEEE. https://doi.org/10.1109/ROMAN.2010.5598687

Fink, J. (2012). Anthropomorphism and human likeness in the design of robots and human-robot interaction. In S. S. Ge, O. Khatib, J. J. Cabibihan, R. Simmons, & M. A. Williams (Eds.), Social robotics: 4th international conference, ICSR 2012 (Vol. 7621, pp. 199–208). Springer. https://doi.org/10.1007/978-3-642-34103-8_20

Hanson, D. (2006). Exploring the Aesthetic Range for Humanoid Robots. Proceedings of the CogSci 2006 Workshop: Toward Social Mechanisms of Android Science. https://www.researchgate.net/publication/228356164_Exploring_the_aesthetic_range_for_humanoid_robots

Kahn, P. H., Friedman, B., Pérez-Granados, D. R., & Freier, N. (2006). Robotic pets in the lives of preschool children. Interaction Studies, 7(3), 405–436. https://doi.org/10.1075/is.7.3.13kah

Kiesler, S., Powers, A., Fussell, S., & Torrey, C. (2008). Anthropomorphic interactions with a robot and robot-like agent. Social Cognition, 26(2), 169–181. https://doi.org/10.1521/soco.2008.26.2.169

Malinowska, J. K. (2022). Can I feel your pain? The biological and socio-cognitive factors shaping people’s empathy with social robots. International Journal of Social Robotics, 14(2), 341–355. https://doi.org/10.1007/s12369-021-00787-5

Mori, M., MacDorman, K., & Kageki, N. (2012). The uncanny valley [From the field]. IEEE Robotics & Automation Magazine, 19, 98–100. https://doi.org/10.1109/MRA.2012.2192811

Reeves, B., & Nass, C. (1996). The Media Equation: How People Treat Computers, Television, and New Media Like Real People and Places. Cambridge University Press.

Tiku, N. (2022, June 11). The Google engineer who thinks the company’s AI has come to life. The Washington Post. https://www.washingtonpost.com/technology/2022/06/11/google-ai-lamda-blake-lemoine/

Złotowski, J., Proudfoot, D., Yogeeswaran, K., & Bartneck, C. (2015). Anthropomorphism: Opportunities and challenges in human–robot interaction. International Journal of Social Robotics, 7(3), 347–360. https://doi.org/10.1007/s12369-014-0267-6