Synthetic Souls and Human Shadows

Why We Abuse the Machines We Build and Why It Matters

Imagine a humanoid robot designed to provide customer service in cafes and stores, politely serving the people there. It gets poked, pushed, and shoved aside, and people laugh as it struggles to stay upright. Or think of a voice assistant that fulfills any and every request that comes its way, but instead of a dialogue, it receives verbal insults and ridicule. These incidents are quite common: online videos show people kicking delivery robots or shouting at AI assistants.

We’re at a point where AI companions, social robots, and virtual assistants are part of daily life and no longer just futuristic ideas. Chatbots can hold emotional conversations, and robots are engineered to imitate human expressions. Because of this, the distinction between a "thing" and a "being" is becoming more and more blurred. However, these advances also bring a concerning trend: as these machines become more lifelike, some people appear to take more and more pleasure in mistreating them.

Why do we do this? Is it just a joke, a harmless way to blow off steam, or is there something more to it? More importantly, what does it reveal about us as humans?

Research suggests that the way we treat and interact with AI and robots, whether with kindness or cruelty, reveals more about our own human nature than about the technology itself. The machines don’t feel pain, at least not yet, but our actions toward them might be shaping us in ways we don’t fully understand.

In this post, let's explore what happens when people mistreat AI and social robots, and why this matters more than we might think.

Brief Historical Perspectives on Human Interaction with Non-Human Entities

Before we dive into the world of robot mistreatment, let's look back at how humans have historically interacted with non-human entities such as animals, inanimate objects, and spiritual figures. Throughout recorded history, humans have engaged with non-human entities in many ways, and these interactions carry cultural, spiritual, and practical meaning.

Since the earliest records of human culture, societies have attributed agency and emotional depth to objects, from religious symbols to lucky charms. Anthropomorphism has been part of our journey for millennia. Even the way we’ve related to animals throughout history reflects this: we have worshipped them as sacred beings and embraced them as family members, attributing human qualities to them while also using them as tools or resources.

Today, the same pattern is playing out with robots and other AI-driven entities. We "make" them more human-like, and so they take on more human-like characteristics and emotional appeal.

Studies in ethnozoology, zooarchaeology, and related disciplines show the same thing: humans have long assigned symbolic or practical value to non-human entities. Why does this matter? Because this perspective helps us understand why some people treat robots with empathy while others feel the need to abuse them.

These past interactions give us a framework for understanding current relationships between humans and non-human entities, whether we are talking about robots, androids, humanoids, or AI assistants on our smart devices. Historically, humans have given non-human entities agency and symbolic value. Today, we give these same qualities to robots and AI, and this affects how we ultimately interact with and treat these technologies.

Feeling for the Robot

In their paper "Is it possible for people to develop a sense of empathy toward humanoid robots and establish meaningful relationships with them?", the authors examined the situations in which people might see robots as capable of forming emotional connections. Based on their systematic review of empathy in human-robot interaction, we can distinguish how human empathy differs from robot "empathy" (Morgante et al., 2024):

| Dimension | Human Empathy | Humanoid Robot "Empathy" |
|---|---|---|
| Emotional Experience | Genuine, felt emotions shared with others | No true emotional experience; only behavioral simulation |
| Cognitive Understanding | Deep, based on mental state attribution and perspective-taking | Algorithmic recognition, limited contextual grasp |
| Social/Developmental Foundation | Innate, shaped by biology, society, and experience | Engineered, designed for functional/social goals |
| Subjectivity | Has self-awareness, intentionality, independent reasoning | Lacks subjectivity, operates via pre-programmed algorithms |
| Assessment (by others) | Observable through actions, physiological & neural responses | Evaluated through perceived behavior, not real empathy |

To sum it up, human empathy involves genuinely feeling and understanding others’ emotions through deep emotional and cognitive processes. Humanoid robots, on the other hand, can only simulate emotions through algorithms; they cannot actually feel or understand them.

The review also pointed out that, beyond the usual anthropomorphism-related effects, other factors can make people feel more empathetic toward robots. One is storytelling: when robots share emotional stories, especially sad or vulnerable narratives, people tend to feel more empathy and a stronger urge to help them. Another is visible struggle: when a robot is clearly having a hard time performing a seemingly difficult task, we tend to root for it and care about it. And maybe, just maybe, seeing it struggle makes it more relatable to us, which is why we feel the urge to help (Morgante et al., 2024).

All in all, it's safe to say that people do feel empathy for humanoid robots, but we also have to acknowledge that this process is layered and complex. It’s influenced by how the robots are designed and programmed, the social norms around them, the context and tasks they are used for, and the ethical questions they raise in a given situation, to name just a few factors.

In human–robot interaction, empathy is a two-way street, so to speak: humans respond to robots when they display human-like qualities or when we can anthropomorphize them enough, and robots are programmed to mirror and mimic human empathy in ways that make the interaction feel natural and engaging, as if it were between two humans.

Robots in the Grey Zone: Between Objects and Beings

Let’s be clear on one thing: robots and AI, at least as they exist today, don’t have feelings. They don’t suffer, and they don’t get offended. But that doesn’t mean our interactions with them are ethically neutral. You might remember news stories about AI systems ignoring human instructions in order to protect themselves. We could say that they were acting in their own interest rather than prioritizing humans when the situation demanded it.

A somewhat older study, "This Robot Stinks! Differences Between Perceived Mistreatment of Robot and Computer Partners", found that people react more negatively when they see a robot being mistreated than when a regular computer is abused. In experiments where people watched someone kick or insult a robot, many reported feelings of discomfort, guilt, or even distress (Carlson et al., 2017).

The study also noted an element of novelty at play: people were less familiar with, and more interested in, robots than computers, simply because robots were still new. It makes me wonder: when robots become as common as computers, will we still care about how they’re treated, or will we ignore their “feelings” the way we did with computers in 2017?

For now, robots seem to occupy a strange psychological space in our minds. They are not human, but they are not just "things" either. We project emotions onto them, which means that even simulated suffering can have a real emotional impact on us.

A newer study, "Can I Feel Your Pain? The Biological and Socio-Cognitive Factors Shaping People’s Empathy with Social Robots", adds further insight into how humans empathize with social robots. It shows that this empathy can be observed at three levels: declared beliefs, behavior, and neural activity (Malinowska, 2021). In other words, it is a real and measurable phenomenon.

And honestly, this tells us that we can’t simply claim we’re immune to it, or that we don’t “feel anything,” and expect that to be true. Even if we like to think of robots as lifeless machines, just things, our brains clearly don’t always get the memo. We flinch when a robot is kicked and feel uncomfortable when it's insulted, yet we keep telling ourselves that it's just a program with no real feelings. But we do feel something for them, because we project life onto things that mimic it, even when we are fully aware that it isn't real.

Brain imaging studies have shown that when people see robots being harmed, their neural responses resemble those triggered by witnessing human pain. And when a robot looks vulnerable, it provokes stronger emotional reactions, just as vulnerable human victims do (Malinowska, 2021).

What If Robots Told the Story?

So far, there's no evidence that robots can actually experience subjective pain or understand mistreatment the way humans do. What does happen is that humans project emotions onto robots, which in turn triggers real, observable empathic responses. But the story isn't only about projection and mirroring.

Researchers have experimented with giving robots a basic sense of self. Yes, it is still given by humans and programmable, but it’s a step toward machines that can register their own boundaries, detect damage, and respond to harmful input. They do this not because they suffer the way humans do, but because they are designed to preserve their function. Perhaps we could call this the appearance of vulnerability?

For example, in one study a dual-arm robot was trained to distinguish its own limbs from external objects and its environment, effectively recognizing its own body with about 88.7% accuracy in cluttered settings. While this doesn’t mean the robot can feel pain, it does lay the groundwork for a kind of bodily awareness. With that awareness, a robot can simulate distress through its behavior, whether by flinching, hesitating, or signaling a malfunction, and this in turn influences how humans interact with it. So, given bodily and functional awareness, a robot can indeed adapt its responses to mistreatment, not because it experiences suffering, but because its programmed goals tell it to avoid damage and maintain function (AlQallaf & Aragon-Camarasa, 2021).

Another paper argues that robots possess a form of "perspective": even if robots do not have an elaborate human-like perspective, they do possess an "epistemic and evaluative perspective." They perceive, categorize, and evaluate their environment and make judgments based on that. They also have something that can be called a "motivational perspective," as they act based on their "beliefs" (Schmetkamp, 2020).

Schmetkamp also defends a pragmatist and relational approach, arguing that empathizing with robots is essential because humans are increasingly compelled to interact with them in a shared world. This perspective emphasizes that understanding robots' "being in the world" through empathy can in turn broaden human horizons, change perspectives, and improve social interactions and moral behavior toward non-human entities (Schmetkamp, 2020).

You could say that taking robots seriously as social companions is part of understanding ourselves as members of a community. How we treat them says a lot about our own values. Do we show them respect, treat them with kindness, or remain indifferent? Despite being machines, they are blending into our shared environment, and the line between artificial and human is becoming increasingly blurred. In the end, the way we interact with them reflects how we see ourselves and the responsibilities we’re willing to take on for the things we choose to bring into that space.

Sarah Cosentino on giving emotions to robots - where we are and where we're heading

How Robot Discrimination Harms Humans and Society

In my opinion, one of the biggest concerns about AI abuse isn’t about the robots at all, but about what it means for us as humans and for society; yes, for the world as we know and experience it. There’s an ongoing debate about whether treating AI poorly could reinforce bad behavior in human relationships. We've had similar discussions about nearly every technological advance, so this is nothing new. For example, you may remember research in media psychology suggesting that violence in video games can desensitize players to real-world aggression. Now we can ask a very similar question: could mistreating AI have the same effect?

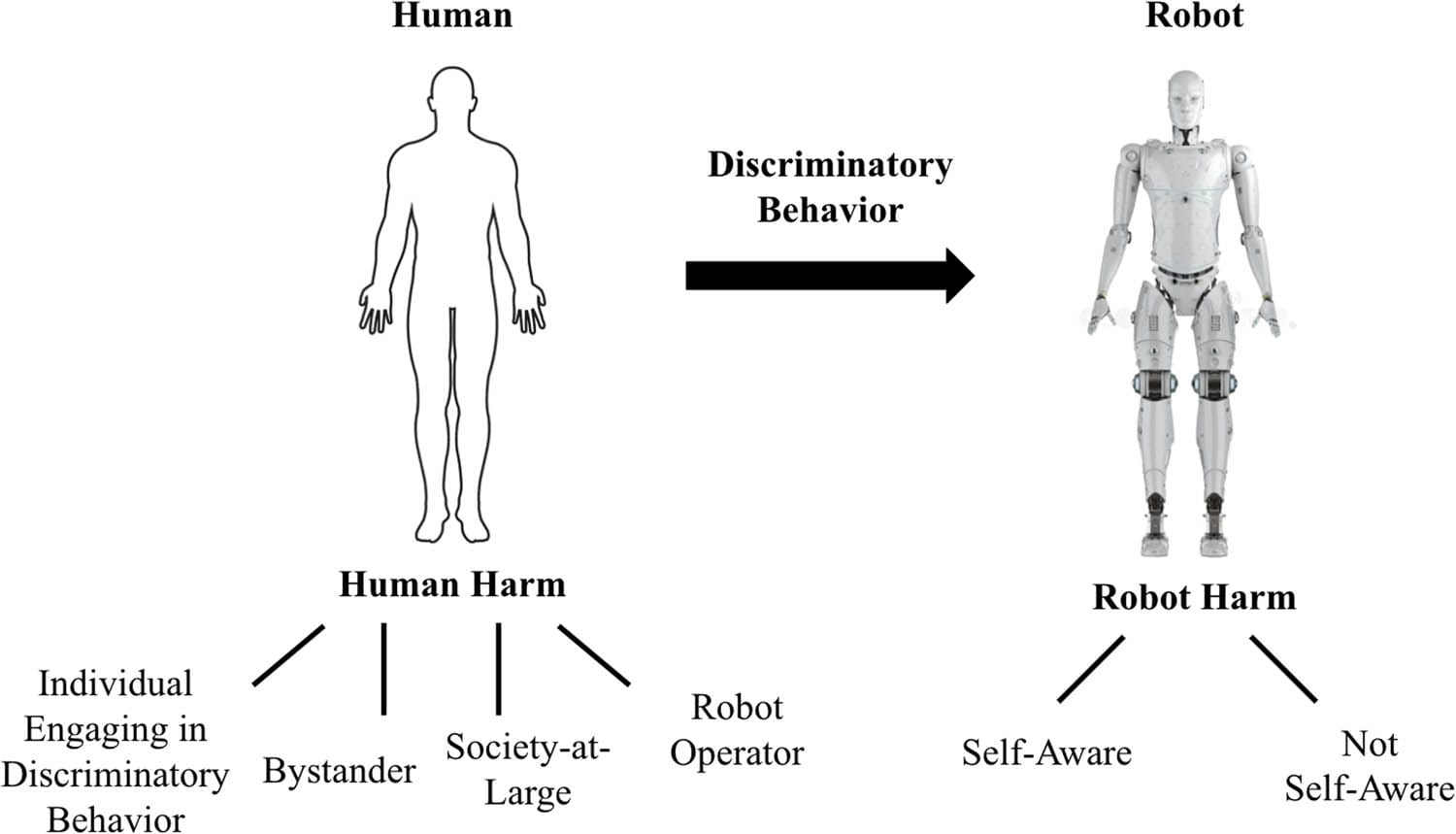

Robots are (at least currently) non-conscious entities without legal rights, so they cannot directly experience harm or ethical violations from discrimination or abuse, at least from a human point of view. Despite this, discriminatory acts directed at robots can still cause secondary harm to humans and society.

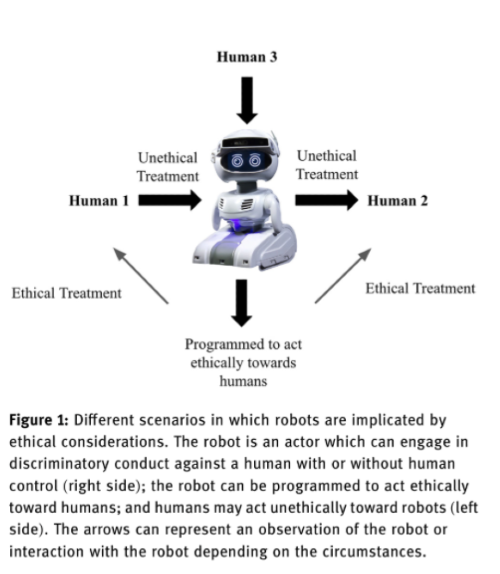

According to Barfield (2023), the current view of robot ethics is primarily concerned with designing robots that act ethically toward humans, with a focus on keeping humans safe. An emerging and less explored perspective, however, focuses on the ethics of human behavior toward robots. Here the question is whether discriminatory acts against robots can harm society, bystanders, or the humans performing the acts, regardless of the robot's lack of consciousness.

Actors and Harms in Robot Discrimination

| Actor | Nature of Harm |
|---|---|
| Society-at-Large | Mistreating robots can normalize unfair behavior, strengthen existing prejudices, and weaken social morality, similar to concerns raised about animal cruelty. |
| Bystanders | Witnessing robot discrimination can cause psychological stress, depression, or health issues (“witness harm”). |
| Discriminator | Damages their own moral character, reveals defects in virtue, and weakens empathy toward others. |
| Robot Operator | May feel emotional harm if attached to the robot or if it is mistreated. |
| Robots | Current robots: No consciousness or legal rights, so no direct harm. Future self-aware robots: Could suffer real psychological harm and discrimination, raising urgent ethical concerns. |

(Source: Barfield, 2023)

Reflections on Humans Through Robots

It's pretty clear that the discussion about robot abuse closely resembles the debates we've been having about animal cruelty and violent video games. Just as with those topics, it comes down to this: the way we treat non-human entities is a mirror that reflects our values and our sense of morality.

If we design robots, and our interactions with them, in ways that encourage or accept harmful behavior, we risk reinforcing those behaviors and attitudes among humans and in society at large. For designers and engineers, this means paying attention not only to ethics but also to the cultural narratives that shape how robots are understood and accepted.

By exploring these topics and possible scenarios, we can better understand the potential implications of conscious AI and perhaps develop better strategies for integrating such entities into our society.

Final thoughts? Robots don’t feel pain (yet), but how we treat them might be shaping us more than we realize or want to acknowledge. As AI and robotics continue to evolve, it’s worth asking: what kinds of relationships and interactions do we want to normalize in our society?

AlQallaf, A., & Aragon-Camarasa, G. (2021). Enabling the sense of self in a dual-arm robot. 2021 IEEE International Conference on Development and Learning (ICDL), 1–7. https://doi.org/10.1109/ICDL49984.2021.9515649

Barfield, J. K. (2023). Discrimination against robots: Discussing the ethics of social interactions and who is harmed. Paladyn, Journal of Behavioral Robotics. https://doi.org/10.1515/pjbr-2022-0113

Carlson, Z., Lemmon, L., Higgins, M., Frank, D., & Feil-Seifer, D. (2017). This robot stinks! Differences between perceived mistreatment of robot and computer partners. arXiv. https://arxiv.org/abs/1711.00561

Malinowska, J. K. (2021). Can I feel your pain? The biological and socio-cognitive factors shaping people’s empathy with social robots. Humanities and Social Sciences Communications, 8(1), 1–13. https://doi.org/10.1057/s41599-021-00827-0

Morgante, E., Susinna, C., Culicetto, L., Quartarone, A., & Lo Buono, V. (2024). Is it possible for people to develop a sense of empathy toward humanoid robots and establish meaningful relationships with them? Frontiers in Psychology, 15, 1391832. https://doi.org/10.3389/fpsyg.2024.1391832

Schmetkamp, S. (2020). Understanding A.I.: Can and should we empathize with robots? Review of Philosophy and Psychology, 11, 881–897. https://folia.unifr.ch/unifr/documents/309488